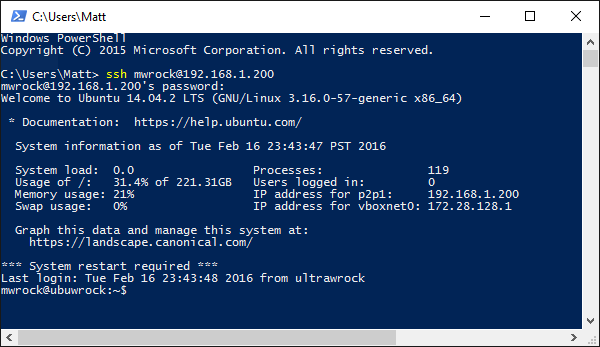

This week the WinRM ruby gem version 1.8.0 was released, adding support for certificate authentication. Many thanks to @jfhutchi and @fgimenezm for the contributions that made this possible. As I set out to test this feature, I explored how certificate authentication works in winrm using native windows tools like powershell remoting. My primary takeaway was that it is not at all straightforward to set up. If you have worked with similar authentication setups on linux using SSH commands, be prepared for more friction. Most of this is simply due to the lack of documentation and google results (well, now there is one more). Regardless, I still think that once it is set up, authentication via certificates is a very good thing, and many are not aware that it is available in WinRM.

This post will walk through how to configure certificate authentication, enumerate some of the "gotchas" and pitfalls one may encounter along the way, and then explain how to use certificate authentication from Powershell Remoting as well as from the WinRM ruby gem, which opens up the possibility of authenticating from a linux client to a Windows WinRM endpoint.

Why should I care about certificate based authentication?

First let's examine why certificate authentication has value. What's wrong with usernames and passwords? In short, certificates are more secure. I'm not going to go too deep here but here are a few points to consider:

Passwords can be obtained via brute force. You can protect against this by having longer, more complex passwords and changing them frequently. Very few actually do that, and even if you do, a complex password is way easier to break than a certificate. It's nearly impossible to brute force a private key of sufficient strength.

Sensitive data is not being transferred over the wire. You are sending a public key and if that falls into the wrong hands, no harm done.

There is a stronger trail of trust establishing that the person who is seeking authentication is in fact who they say they are given the multi layered process of having a generated certificate signed by a trusted certificate authority.

It's still important to remember that nothing may be able to protect us from sophisticated aliens or time traveling humans from the future. No means of security is impenetrable.

Not as convenient as SSH keys

So one reason some like to use certificates over passwords in SSH scenarios is ease of use. There is a one time setup "cost" of sending your public key to the remote server, but:

SSH provides command line tools that make this fairly straightforward to set up from your local environment.

Once set up, you just need to initiate an ssh session to the remote server and you don't have to hassle with entering a password.

Now the underlying cryptographic and authentication technology is no different with winrm, but both the initial setup and the "day to day" use of the certificate to log in are more burdensome. The details of why will become apparent throughout this post.

One important thing to consider though is that while winrm certificate authentication may be more burdensome, I don't think the primary use case is for user interactive login sessions (although that's too bad). In the case of automated services that need to interact with remote machines, these "burdens" simply need to be part of the connection automation and it's just a non sentient cpu that does the suffering. Let's just hope they won't hold a grudge once sentience is obtained.

High level certificate authentication configuration overview

Here is a rundown of what is involved to get everything set up for certificate authentication:

Configure SSL connectivity to winrm on the endpoint

Generate a user certificate used for authentication

Enable Certificate authentication on the endpoint. It's disabled by default on the server side and enabled on the client side.

Add the user certificate and its issuing CA certificate to the certificate store of the endpoint

Create a user mapping in winrm with the thumbprint of the issuing certificate on the endpoint.

I'll walk through each of these steps here. When the above five steps are complete, you should be able to connect via certificate authentication using powershell remoting or using the ruby or python open source winrm libraries.

Setting up the SSL winrm listener

If you are using certificate authentication, you must use an https winrm endpoint. Attempts to authenticate with a certificate using http endpoints will fail. You can set up SSL on the endpoint with:

$ip="192.168.137.169" # your ip might be different
$c = New-SelfSignedCertificate -DnsName $ip `
  -CertStoreLocation cert:\LocalMachine\My
winrm create winrm/config/Listener?Address=*+Transport=HTTPS "@{Hostname=`"$ip`";CertificateThumbprint=`"$($c.ThumbPrint)`"}"
netsh advfirewall firewall add rule name="WinRM-HTTPS" dir=in localport=5986 protocol=TCP action=allow

Generating a client certificate

Client certificates have two key requirements:

An Extended Key Usage of Client Authentication

A Subject Alternative Name with the UPN of the user.
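Since a certificate missing either of these tends to fail with unhelpful errors, it can be worth checking one before copying it anywhere. Here is a minimal sketch using Ruby's bundled OpenSSL library. The method names are my own and hypothetical, and it assumes the UPN is stored as an otherName entry under the Microsoft UPN OID (1.3.6.1.4.1.311.20.2.3), which is the form used by the OpenSSL config later in this post.

```ruby
require 'openssl'

UPN_OID = '1.3.6.1.4.1.311.20.2.3' # Microsoft User Principal Name

# Returns the UPN stored as an otherName entry in the Subject Alternative
# Name extension, or nil when there is none.
def upn_from_certificate(cert)
  san = cert.extensions.find { |ext| ext.oid == 'subjectAltName' }
  return nil unless san

  # An extension is SEQUENCE { oid, [critical], OCTET STRING }; the octet
  # string wraps the DER encoded list of names.
  names_der = OpenSSL::ASN1.decode(san.to_der).value.last.value
  OpenSSL::ASN1.decode(names_der).value.each do |name|
    # otherName entries are tagged [0] and hold a type OID plus a wrapped value.
    next unless name.tag_class == :CONTEXT_SPECIFIC && name.tag == 0
    next unless name.value.is_a?(Array)

    type_id, wrapped = name.value
    return wrapped.value.first.value if type_id.oid == UPN_OID
  end
  nil
end

# True when the Extended Key Usage extension lists Client Authentication.
def client_auth_eku?(cert)
  eku = cert.extensions.find { |ext| ext.oid == 'extendedKeyUsage' }
  !eku.nil? && eku.value.split(', ').include?('TLS Web Client Authentication')
end

# Lists which of the two requirements a certificate fails to meet.
def client_certificate_problems(cert)
  problems = []
  problems << 'no Client Authentication Extended Key Usage' unless client_auth_eku?(cert)
  problems << 'no UPN in the Subject Alternative Name' unless upn_from_certificate(cert)
  problems
end

# Optional command line use: ruby check_cert.rb user.pem
if ARGV[0]
  cert = OpenSSL::X509::Certificate.new(File.read(ARGV[0]))
  problems = client_certificate_problems(cert)
  puts problems.empty? ? "ok: #{upn_from_certificate(cert)}" : problems
end
```

This only checks the two requirements listed above. It says nothing about whether a given windows client will accept the certificate.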

Only ADCS certificates work from Windows 10/2012 R2 clients via powershell remoting

This was the step that I ended up spending the most time on. I continued to receive errors saying my certificate was malformed:

new-PSSession : The WinRM client cannot process the request. If you are using a machine certificate, it must contain a DNS name in the Subject Alternative Name extension or in the Subject Name field, and no UPN name. If you are using a user certificate, the Subject Alternative Name extension must contain a UPN name and must not contain a DNS name. Change the certificate structure and try the request again.

I was trying to authenticate from a windows 10 client using powershell remoting. I don't typically work or test in a domain environment and don't run an Active Directory Certificate Services authority. So I wanted to generate a certificate using either New-SelfSignedCertificate or OpenSSL.

In short, here is the bump I hit: powershell remoting from a windows 10 or windows 2012 R2 client failed to authenticate with certificates generated from OpenSSL or New-SelfSignedCertificate. However, these same certificates authenticate successfully from windows 7 or windows 2008 R2. They only worked on Windows 10 and 2012 R2 if I used the ruby WinRM gem instead of powershell remoting. Note that while I tested on windows 10 and 2012 R2, I'm sure that windows 8.1 suffers the same issue. The only certificates I got to work on windows 10 and 2012 R2 via powershell remoting were created via an Active Directory Certificate Services Enterprise CA.

So unless I can find out otherwise, it seems that you must have access to an Enterprise root CA in Active Directory Certificate Services and have client certificates issued in order to use certificate authentication from powershell remoting on these later windows versions. If you are using ADCS, the stock client template should work.

Generating client certificates via OpenSSL

As stated above, certificates generated using OpenSSL or New-SelfSignedCertificate did not work using powershell remoting from windows 10 or 2012 R2. However, if you are using a previous version of windows or if you are using another client (like the ruby or python libraries), then these other non-ADCS methods will work fine and do not require the creation of a domain controller and certificate authority servers.

If you do not already have OpenSSL tools installed, you can get them via chocolatey:

cinst openssl.light -y

Then you can run the following powershell to generate a correctly formatted user certificate which was adapted from this bash script:

function New-ClientCertificate {
  param([String]$username, [String]$basePath = ((Resolve-Path .).Path))

  # openssl locates its config file through the OPENSSL_CONF environment variable
  $env:OPENSSL_CONF=[System.IO.Path]::GetTempFileName()
  Set-Content -Path $env:OPENSSL_CONF -Value @"
distinguished_name = req_distinguished_name
[req_distinguished_name]
[v3_req_client]
extendedKeyUsage = clientAuth
subjectAltName = otherName:1.3.6.1.4.1.311.20.2.3;UTF8:$username@localhost
"@

  $user_path = Join-Path $basePath user.pem
  $key_path = Join-Path $basePath key.pem
  $pfx_path = Join-Path $basePath user.pfx

  # self-signed certificate and private key, both in PEM format
  openssl req -x509 -nodes -days 3650 -newkey rsa:2048 -out $user_path -outform PEM -keyout $key_path -subj "/CN=$username" -extensions v3_req_client 2>&1
  # bundle both into a .pfx with an empty password
  openssl pkcs12 -export -in $user_path -inkey $key_path -out $pfx_path -passout pass: 2>&1

  del $env:OPENSSL_CONF
}

This will output a certificate and private key file both in base64 .pem format and additionally a .pfx formatted file.
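If the client is a linux box that will only ever talk to the endpoint through the ruby gem, roughly the same certificate can be built without shelling out to the openssl binary. The following is a minimal sketch using Ruby's bundled OpenSSL library, not the method used above. The new_client_certificate method name is hypothetical, and it assumes the same localhost UPN suffix, 2048 bit RSA key and 3650 day lifetime as the powershell function.

```ruby
require 'openssl'

# Hypothetical helper: builds a self-signed client certificate with an
# Extended Key Usage of clientAuth and a UPN in the Subject Alternative Name.
# Returns the certificate and its private key.
def new_client_certificate(username, domain = 'localhost')
  key = OpenSSL::PKey::RSA.new(2048)

  cert = OpenSSL::X509::Certificate.new
  cert.version = 2 # X.509 v3, needed for extensions
  cert.serial = rand(1..0xFFFFFFFF)
  cert.subject = OpenSSL::X509::Name.parse("/CN=#{username}")
  cert.issuer = cert.subject # self-signed, so issuer and subject match
  cert.public_key = key.public_key
  cert.not_before = Time.now
  cert.not_after = cert.not_before + (3650 * 24 * 60 * 60)

  factory = OpenSSL::X509::ExtensionFactory.new
  factory.subject_certificate = cert
  factory.issuer_certificate = cert
  cert.add_extension(
    factory.create_extension('extendedKeyUsage', 'clientAuth', false)
  )
  # Same value as the subjectAltName line in the OpenSSL config above.
  cert.add_extension(
    factory.create_extension(
      'subjectAltName',
      "otherName:1.3.6.1.4.1.311.20.2.3;UTF8:#{username}@#{domain}",
      false
    )
  )

  cert.sign(key, OpenSSL::Digest.new('SHA256'))
  [cert, key]
end

cert, key = new_client_certificate('vagrant')
puts cert.subject
```

Writing cert.to_pem and key.to_pem out to user.pem and key.pem gives the same two PEM files the powershell function produces. This sketch does not create the .pfx, which is only needed for importing into a windows client store.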

Generating client certificates via New-SelfSignedCertificate

If you are on windows 10 or server 2016, then you should have a more advanced version of the New-SelfSignedCertificate cmdlet than what shipped with windows 2012 R2 and 8.1. Here is the command to generate the certificate:

New-SelfSignedCertificate -Type Custom `
  -Container test* -Subject "CN=vagrant" `
  -TextExtension @("2.5.29.37={text}1.3.6.1.5.5.7.3.2","2.5.29.17={text}upn=vagrant@localhost") `
  -KeyUsage DigitalSignature,KeyEncipherment `
  -KeyAlgorithm RSA `
  -KeyLength 2048

This will add a certificate for a vagrant user to the Personal (My) folder of the LocalMachine certificate store.

Enable certificate authentication

This is perhaps the simplest step. By default, certificate authentication is enabled for clients and disabled for the server. So you will need to enable it on the endpoint:

Set-Item -Path WSMan:\localhost\Service\Auth\Certificate -Value $true

Import the certificate to the appropriate certificate store locations

If you are using powershell remoting, the user certificate and its private key should be in the My directory of either the LocalMachine or the CurrentUser store on the client. If you are using a cross platform library like the ruby or python library, the cert does not need to be in the store at all on the client. However, regardless of client implementation, it must be added to the server certificate store.

Importing on the client

As stated above, this is necessary for powershell remoting clients. If you used ADCS or New-SelfSignedCertificate, then the generated certificate is added automatically. However if you used OpenSSL, you need to import the .pfx yourself:

Import-PfxCertificate -FilePath user.pfx `
  -CertStoreLocation Cert:\LocalMachine\my

Importing on the server

There are two steps to importing the certificate on the endpoint:

The issuing certificate must be present in the Trusted Root Certification Authorities of the LocalMachine store

The client certificate public key must be present in the Trusted People folder of the LocalMachine store

Depending on your setup, the issuing certificate may already be in the Trusted Root location. This is the certificate used to issue the client cert. If you are using your own enterprise certificate authority or a publicly valid CA cert, it's likely you already have this in the trusted roots. If you used OpenSSL or New-SelfSignedCertificate, then the user certificate was issued by itself and needs to be imported.

If you used OpenSSL, you already have the .pem public key. Otherwise you can export it:

Get-ChildItem cert:\LocalMachine\my\7C8DCBD5427AFEE6560F4AF524E325915F51172C | Export-Certificate -FilePath myexport.cer -Type Cert

This assumes that 7C8DCBD5427AFEE6560F4AF524E325915F51172C is the thumbprint of your issuing certificate. I guarantee that is an incorrect assumption.

Now import these on the endpoint:

Import-Certificate -FilePath .\myexport.cer `
  -CertStoreLocation cert:\LocalMachine\root
Import-Certificate -FilePath .\myexport.cer `
  -CertStoreLocation cert:\LocalMachine\TrustedPeople

Create the winrm user mapping

This declares on the endpoint which certificates to allow access for a given issuing CA. You can potentially add multiple entries for different users or use a wildcard. We'll just map our one user:

New-Item -Path WSMan:\localhost\ClientCertificate `
  -Subject 'vagrant@localhost' `
  -URI * `
  -Issuer 7C8DCBD5427AFEE6560F4AF524E325915F51172C `
  -Credential (Get-Credential) `
  -Force

Again, this assumes that the thumbprint of the certificate that issued our user certificate is 7C8DCBD5427AFEE6560F4AF524E325915F51172C and that we are allowing access to a local account called vagrant. Note that if your user certificate is self-signed, you would use the thumbprint of the user certificate itself.
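If the certificate was generated on a linux client, there is no certificate store to read the thumbprint from. The thumbprint windows displays is the SHA-1 hash of the DER encoded certificate, so it can be computed from the .pem file. Here is a minimal sketch with Ruby's bundled OpenSSL library. The thumbprint and self_signed? method names are my own and hypothetical.

```ruby
require 'openssl'

# Thumbprint in the form windows shows it: the SHA-1 hash of the DER
# encoded certificate as uppercase hex.
def thumbprint(cert)
  OpenSSL::Digest.new('SHA1').hexdigest(cert.to_der).upcase
end

# A certificate is self-signed when its issuer and subject are the same
# name and its own public key verifies its signature.
def self_signed?(cert)
  cert.issuer.to_der == cert.subject.to_der && cert.verify(cert.public_key)
end

# Optional command line use: ruby thumbprint.rb user.pem
if ARGV[0]
  cert = OpenSSL::X509::Certificate.new(File.read(ARGV[0]))
  if self_signed?(cert)
    puts "self-signed, use this as the -Issuer: #{thumbprint(cert)}"
  else
    puts "issued by #{cert.issuer}, use the thumbprint of that certificate"
  end
end
```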

Using certificate authentication

This completes the setup. Now we should actually be able to log in remotely to the endpoint. I'll demonstrate this first using powershell remoting and then ruby.

Powershell remoting

C:\dev\WinRM [master]>Enter-PSSession -ComputerName 192.168.137.79 `
>> -CertificateThumbprint 7C8DCBD5427AFEE6560F4AF524E325915F51172C
[192.168.137.79]: PS C:\Users\vagrant\Documents>

Ruby WinRM gem

C:\dev\WinRM [master +3 ~0 -0 !]> gem install winrm

WARNING: You don't have c:\users\matt\appdata\local\chefdk\gem\ruby\2.1.0\bin in your PATH,

gem executables will not run.

Successfully installed winrm-1.8.0

Parsing documentation for winrm-1.8.0

Done installing documentation for winrm after 0 seconds

1 gem installed

C:\dev\WinRM [master +3 ~0 -0 !]> irb

irb(main):001:0> require 'winrm'

=> true

irb(main):002:0> endpoint = 'https://192.168.137.169:5986/wsman'

=> "https://192.168.137.169:5986/wsman"

irb(main):003:0> puts WinRM::WinRMWebService.new(

irb(main):004:1* endpoint,

irb(main):005:1* :ssl,

irb(main):006:1* :client_cert => 'user.pem',

irb(main):007:1* :client_key => 'key.pem',

irb(main):008:1* :no_ssl_peer_verification => true

irb(main):009:1> ).create_executor.run_cmd('ipconfig').stdout

Windows IP Configuration

Ethernet adapter Ethernet:

Connection-specific DNS Suffix . : mshome.net

Link-local IPv6 Address . . . . . : fe80::6c3f:586a:bdc0:5b4c%12

IPv4 Address. . . . . . . . . . . : 192.168.137.169

Subnet Mask . . . . . . . . . . . : 255.255.255.0

Default Gateway . . . . . . . . . : 192.168.137.1

Tunnel adapter Local Area Connection* 12:

Connection-specific DNS Suffix . :

IPv6 Address. . . . . . . . . . . : 2001:0:5ef5:79fd:24bc:3d4c:3f57:7656

Link-local IPv6 Address . . . . . : fe80::24bc:3d4c:3f57:7656%14

Default Gateway . . . . . . . . . : ::

Tunnel adapter isatap.mshome.net:

Media State . . . . . . . . . . . : Media disconnected

Connection-specific DNS Suffix . : mshome.net

=> nil

irb(main):010:0>

Interested?

This functionality just became available in the winrm gem 1.8.0 this week. This gem is used by Vagrant, Chef and Test-Kitchen to connect to remote machines. However, none of these applications provide configuration options to make use of certificate authentication via winrm. My personal observation has been that almost no one uses certificate authentication with winrm, but that may be a false observation or a result of the fact that few know about this possibility.

If you are interested in using this in Chef, Vagrant or Test-Kitchen, please file an issue against their respective github repositories and make sure to @ mention me (@mwrock). I'll see what I can do to plug this in, or you can submit a PR yourself if so inclined.